Research

Our lab conducts research at the intersection of security, privacy, machine learning, and human-computer interaction. Our work spans three major research directions: AI security, data privacy, and system security.

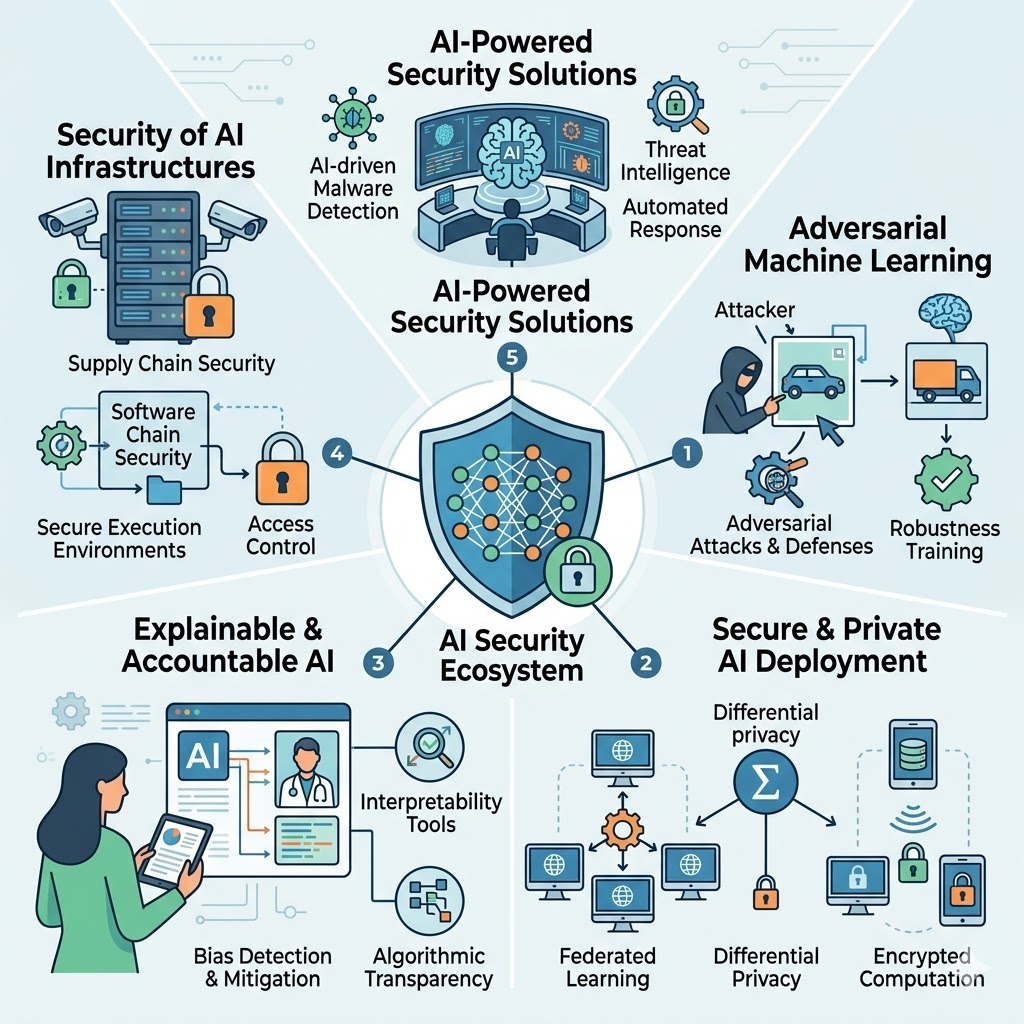

AI Security

EIA: Environmental Injection Attack on Generalist Web Agents for Privacy Leakage ICLR 2025

BadMerging: Backdoor Attacks Against Model Merging CCS 2024

Chimera: Creating Digitally Signed Fake Photos by Fooling Image Recapture and Deepfake Detectors USENIX Security 2025

SoK: Towards Effective Automated Vulnerability Repair USENIX Security 2025

Conditional Supervised Contrastive Learning for Fair Text Classification EMNLP Findings 2022

Model-Targeted Poisoning Attacks with Provable Convergence ICML 2021

Hybrid Batch Attacks: Finding Black-box Adversarial Examples with Limited Queries USENIX Security 2020

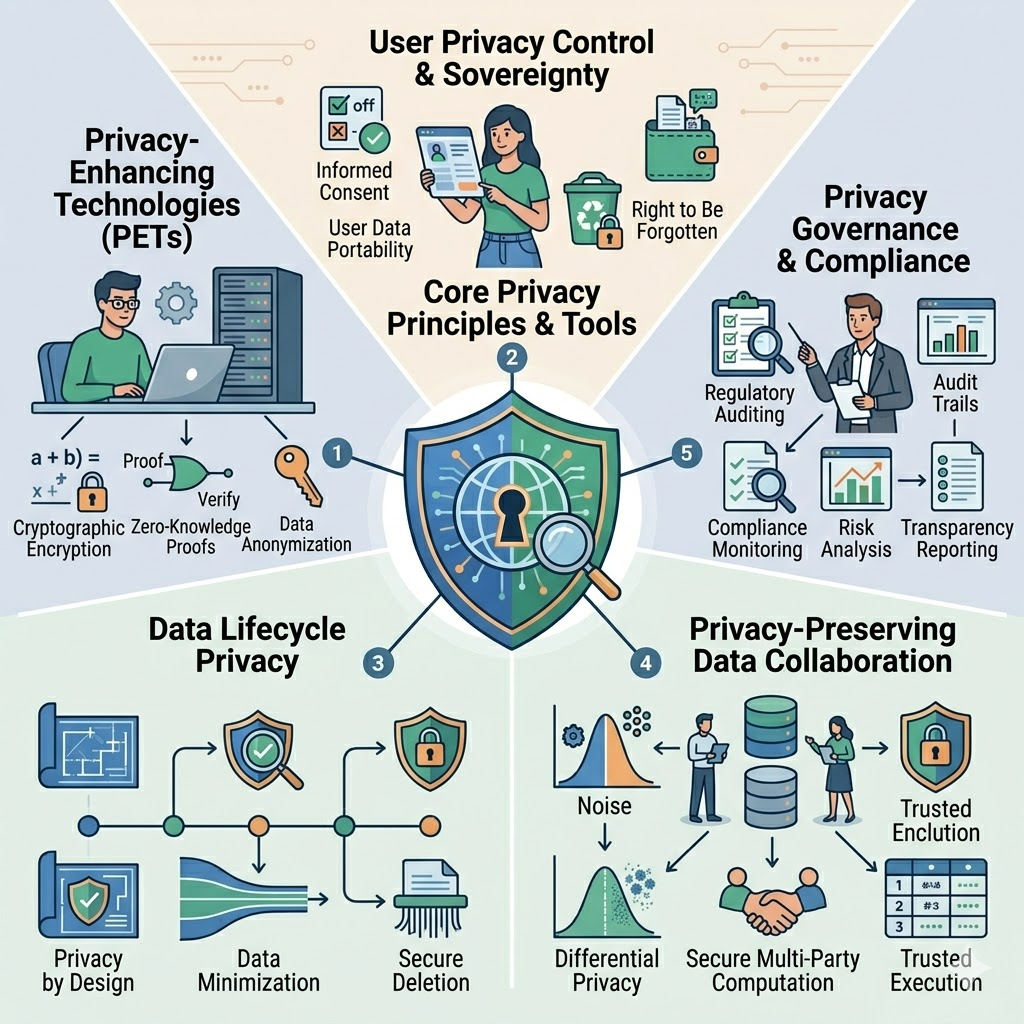

Data Privacy

Breaking the Illusion: Automated Reasoning of GDPR Consent Violations IEEE S&P (Oakland) 2026

Location-Enhanced Information Flow Analysis for Smart Home Automations PETS 2026

Free WiFi is not ultimately free: Privacy Perceptions of Users regarding City-wide WiFi Services PETS 2025

Where have you been? A Study of Privacy Risk for Point-of-Interest Recommendation SIGKDD 2024

CHKPLUG: Checking GDPR Compliance of WordPress Plugins via Cross-language Code Property Graph NDSS 2023

SenRev: Measurement of Personal Information Disclosure in Online Health Communities PETS 2023

Exploring Smart Commercial Building Occupants' Perceptions and Notification Preferences of IoT Data Collection EuroS&P 2023

Birthday, Name and Bifacial-security: Understanding Passwords of Chinese Web Users USENIX Security 2019

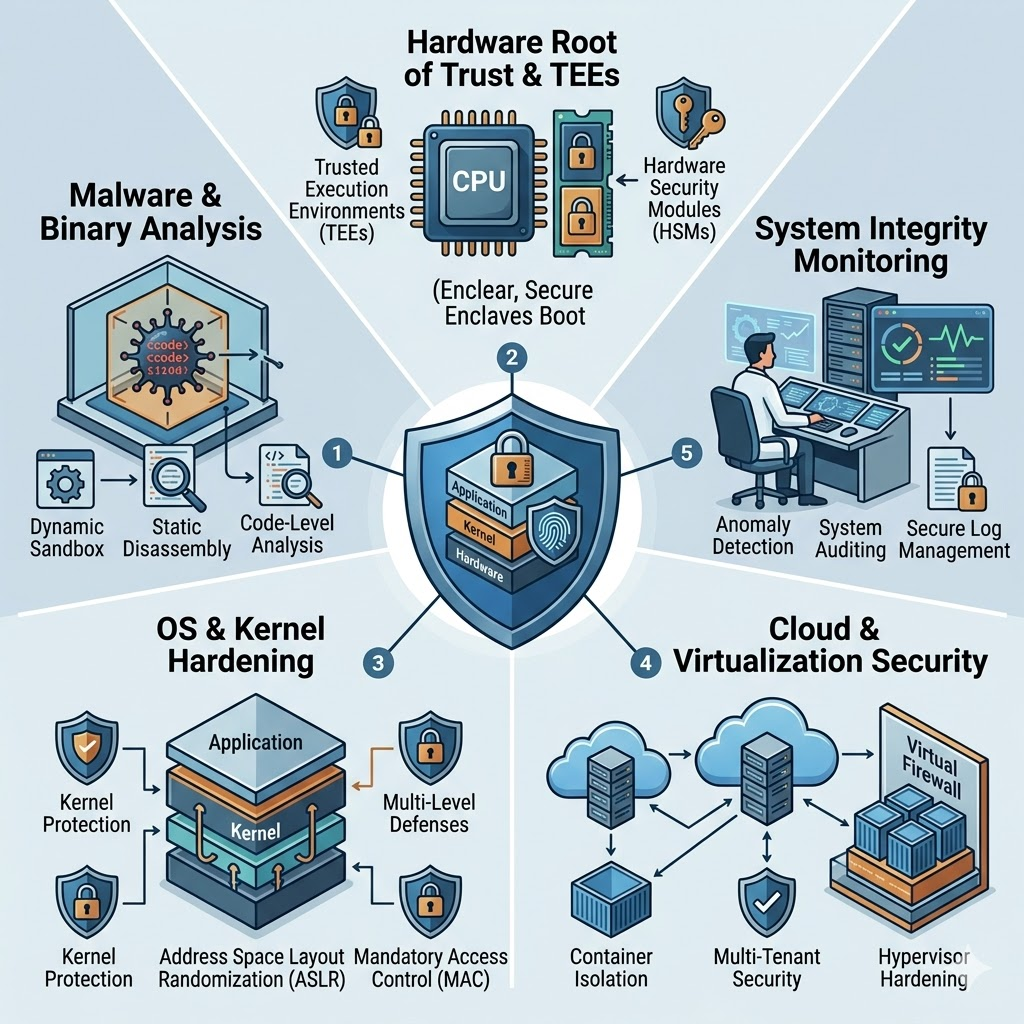

System Security

From Perception to Protection: A Developer-Centered Study of Security and Privacy Threats in Extended Reality (XR) NDSS 2026

Waltzz: WebAssembly Runtime Fuzzing with Stack-Invariant Transformation USENIX Security 2025

AuthSaber: Automated Safety Verification of OpenID Connect Programs CCS 2024

Alexa, is the skill always safe? Uncover Lenient Skill Vetting Process and Protect User Privacy at Run Time ICSE 2024

Towards Real-time Voice Interaction Data Collection Monitoring and Ambient Light Privacy Notification for Voice-controlled Services USEC 2024

Towards Usable Security Analysis Tools for Trigger-Action Programming SOUPS 2023

Your Microphone Array Retains Your Identity: A Robust Voice Liveness Detection System for Smart Speakers USENIX Security 2022

SkillBot: Identifying Risky Content for Children in Alexa Skills ACM TOIT 2022

VerHealth: Vetting Medical Voice Applications through Policy Enforcement UbiComp 2021

Read Between the Lines: An Empirical Measurement of Sensitive Applications of Voice Personal Assistant Systems WWW 2020

TKPERM: Cross-platform Permission Knowledge Transfer to Detect Overprivileged Third-party Applications NDSS 2020

OAuthLint: An Empirical Study on OAuth Bugs in Android Applications ASE 2019

Poster: Attack the Dedicated Short-Range Communication for Connected Vehicles IEEE S&P (Oakland) 2019

Side Channel Attacks in GPU-Virtualization-Based Computation-Offload Systems SafeThings 2019